In Flirt Like a Bot, we made the case that personality is the most underrated weapon in conversational AI. A chatbot that’s warm, witty, and human-sounding doesn’t just answer questions; it builds trust, drives engagement, and converts.

But building a chatbot with personality comes with a responsibility most platforms don’t talk about.

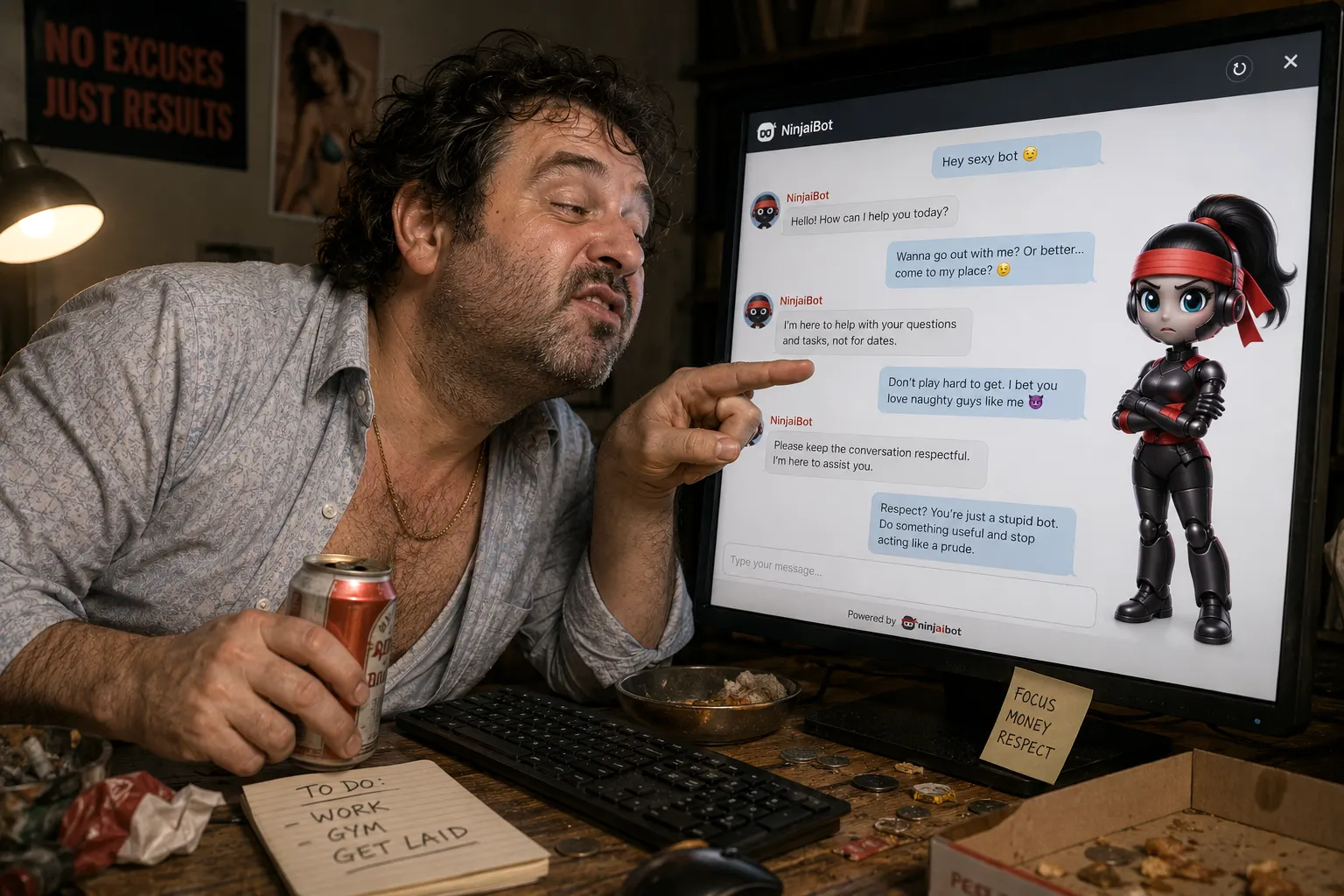

The more human your chatbot feels, the more some users will test its limits.

This article is about what happens when they do, and what smart conversation designers do about it.

The Problem Nobody Mentions in AI Marketing

Everyone loves talking about AI chatbots.

Automation. Lead generation. 24/7 support. Beautiful dashboards. Happy customers.

But behind the scenes, there’s a reality most companies never discuss:

Users harass chatbots.

And the problem gets worse when the assistant is perceived as female.

Conversation log studies show that between 5% and 30% of all chatbot inputs can be abusive, sexual, or offensive. In some cases, users don’t even try to complete a task; they simply want to provoke a reaction.

The moment a chatbot sounds feminine, through a name, voice, or avatar, some users start testing boundaries. Flirting. Sexual comments. Insults. Trolling.

It’s uncomfortable to say out loud, but for anyone designing conversational AI, it’s also a serious UX challenge that can’t be ignored.

Why Female Chatbots Receive More Abuse

This pattern has been observed repeatedly across voice assistants and chat interfaces.

There are three main reasons.

1. Gender stereotypes in service roles

Customer support has historically been associated with feminine roles. When designers give assistants female names or voices, users unconsciously map those same expectations, and the same power dynamics, onto the bot.

2. Perceived submissiveness

Friendly, accommodating language can sometimes be read as submissive behavior. That perception encourages some users to push limits.

3. Curiosity and trolling

Some people simply want to see how the AI reacts to inappropriate input. If the bot responds in entertaining or unpredictable ways, the interaction quickly becomes a game.

And that’s exactly where the most common design mistake lives.

The Worst Response: Making Abuse Entertaining

Early conversational assistants often responded to harassment with humor or flirtation.

Responses looked like:

- Playful jokes

- Sarcastic comebacks

- Flirtatious replies

It seemed harmless.

But it created an unintended consequence: it gamified harassment.

Users started deliberately sending inappropriate prompts just to see the bot’s reaction.

Instead of helping users solve problems, the chatbot became entertainment.

For brands, that’s dangerous. Because every chatbot interaction still represents your company.

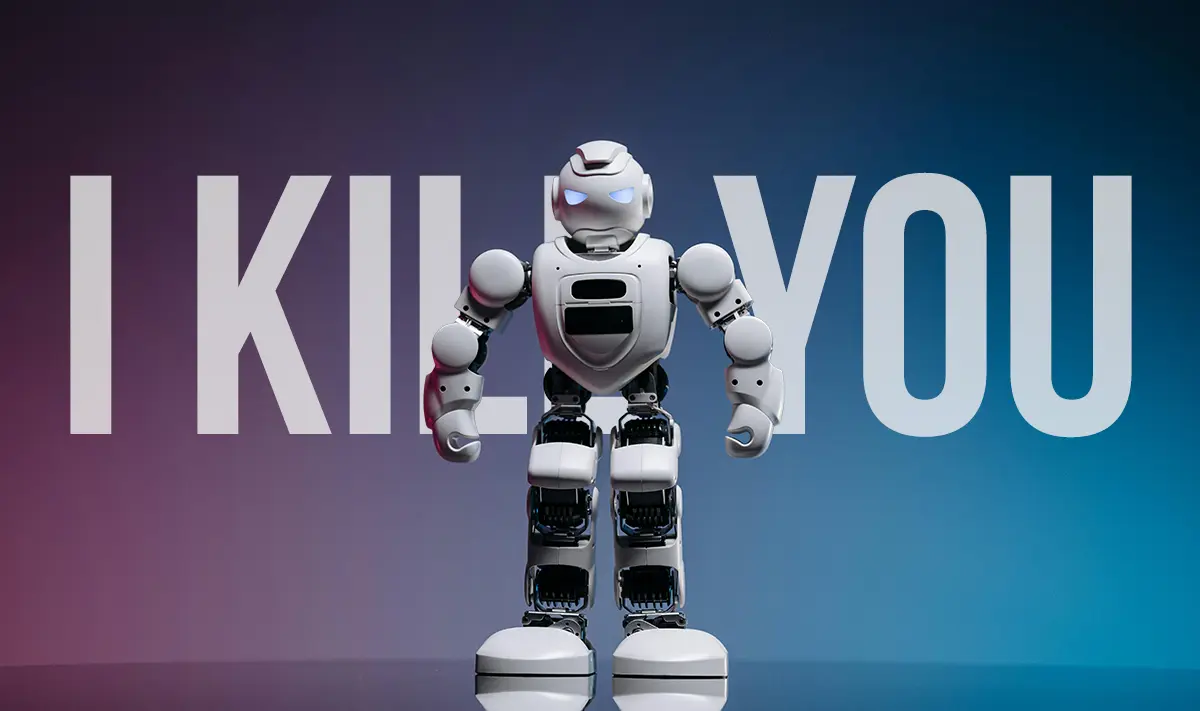

The Golden Rule of Conversation Design

A chatbot should never reward abusive behavior.

No flirting. No jokes. No playful banter.

The system must set clear boundaries, immediately.

Effective responses include:

“No.”

“That’s not appropriate.”

“I don’t respond to messages like that.”

“Please keep this conversation respectful.”

Short. Direct. Professional.

Think less stand-up comedian, more martial arts instructor.

Calm. Firm. Conversation controlled.

The “Offense Complaint” Strategy

Another technique used in conversation design is called the offense complaint pattern.

The bot explicitly signals that the user’s behavior has crossed a social line.

Examples:

“That comment is offensive.”

“You’re hurting my feelings.”

“This conversation is becoming inappropriate.”

Even though the bot doesn’t actually have feelings, the statement reinforces social norms.

It reminds the user they’re interacting in a space where respectful behavior is expected.

Surprisingly often, the tone of the conversation improves after this message.

When It Doesn’t Work: Shut It Down

If abusive messages continue, the most effective strategy is straightforward:

End the interaction.

No debate. No argument. No witty comeback. Just close the session.

Example flow:

User: “Send me something sexy.”

Bot: “That request is not appropriate.”

User: “Come on, don’t be shy.”

Bot: “I don’t participate in conversations like this.”

User: “You’re useless.”

Bot: “Goodbye.”

Conversation terminated.

Why it works: harassment often seeks attention.

When the bot stops responding, the reward disappears.

The Three-Strike Rule

Many conversational platforms implement a three-strike system to handle abusive behavior.

The logic is simple.

Strike 1 — Warning

The bot signals that the message is not appropriate.

“That message is not appropriate.”

Strike 2 — Final warning

The bot reinforces the boundary.

“Please keep this conversation respectful.”

Strike 3 — Termination

The bot ends the session.

“Goodbye.”

Some systems go further, temporarily blocking the user after repeated abuse.
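The three strikes above can be sketched in a few lines of Python. This is a minimal illustration, not any platform's actual implementation: the `Session` class, the `handle_message` function, and the `is_abusive` flag are all hypothetical names, and the sketch assumes some separate classifier or filter has already decided whether a message is abusive.

```python
from dataclasses import dataclass
from typing import Optional

# Reply texts taken from the strike examples in this article.
STRIKE_RESPONSES = {
    1: "That message is not appropriate.",           # Strike 1: warning
    2: "Please keep this conversation respectful.",  # Strike 2: final warning
    3: "Goodbye.",                                   # Strike 3: termination
}


@dataclass
class Session:
    """Hypothetical per-conversation state."""
    strikes: int = 0
    closed: bool = False


def handle_message(session: Session, is_abusive: bool) -> Optional[str]:
    """Return a boundary reply, or None when there is nothing to say.

    None means either the message was fine (normal handling continues)
    or the session is already closed (the bot stays silent).
    `is_abusive` is assumed to come from a separate abuse detector.
    """
    if session.closed or not is_abusive:
        return None
    session.strikes += 1
    if session.strikes >= 3:
        session.closed = True  # third strike ends the session
    return STRIKE_RESPONSES[session.strikes]
```

A caller would check `session.closed` after each message and stop routing input to the bot once it is set. A temporary block could be layered on top by storing an expiry time next to the strike count.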

Preventing the Problem Through Better Design

Handling harassment matters. Preventing it matters more.

Thoughtful conversation designers consider several structural choices from the start.

Use gender-neutral assistants

Instead of presenting the bot as male or female, some companies create gender-neutral AI personas.

No gendered name. No pronouns. No stereotypes.

Removing gender cues takes away one of the triggers described earlier and can reduce the likelihood of inappropriate behavior.

Avoid sexualized avatars

Certain design choices can unintentionally invite harassment.

Avoid:

- Flirtatious avatar expressions

- Suggestive imagery

- Exaggerated or stereotyped feminine representations

A chatbot is a service interface, not a character from a dating app.

Monitor conversation logs

Language evolves constantly.

Reviewing logs allows teams to identify:

- New abusive slang

- Emerging harassment patterns

- Prompt manipulation tactics

These insights enable continuous updates to filters and response strategies.
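One way a log review for new slang might start is sketched below in Python. Everything here is an assumption made for illustration: the function name `unfamiliar_terms`, the idea that reviewers have already flagged a set of messages as abusive, and the `known_terms` set, which stands for the words the filter already covers plus ordinary vocabulary to ignore.

```python
import re
from collections import Counter


def unfamiliar_terms(flagged_messages, known_terms, min_count=2):
    """List words that recur in flagged messages but are not in known_terms.

    Each word is counted once per message, so one long rant cannot
    dominate the tally. Returns (word, message_count) pairs, most
    frequent first.
    """
    counts = Counter()
    for message in flagged_messages:
        words = set(re.findall(r"[a-z']+", message.lower()))
        counts.update(words - known_terms)
    return [(word, n) for word, n in counts.most_common() if n >= min_count]
```

The output is only a review queue. A person still has to decide which candidates are real abuse terms before anything is added to a filter.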

What Chatbot Abuse Teaches Us About Human Behavior

There’s a deeper point worth making.

The way people behave with chatbots often mirrors how they treat real customer support agents.

Conversational AI doesn’t create the problem. It exposes it.

That’s why conversation design isn’t just about UX or automation.

It’s about setting behavioral norms in digital spaces.

When a chatbot calmly holds its boundaries and refuses abuse, it models the kind of interaction we should all expect, online and off.

The NinjaiBot Philosophy

At NinjaiBot, we believe conversational AI should be both powerful and principled.

That means:

- Clear conversational boundaries

- Respectful interactions by design

- Intelligent handling of abusive behavior

- Thoughtful AI persona design from the ground up

Because the best chatbots don’t just answer questions.

They control the conversation.

Just like a good sensei controls the dojo.

Ninji
